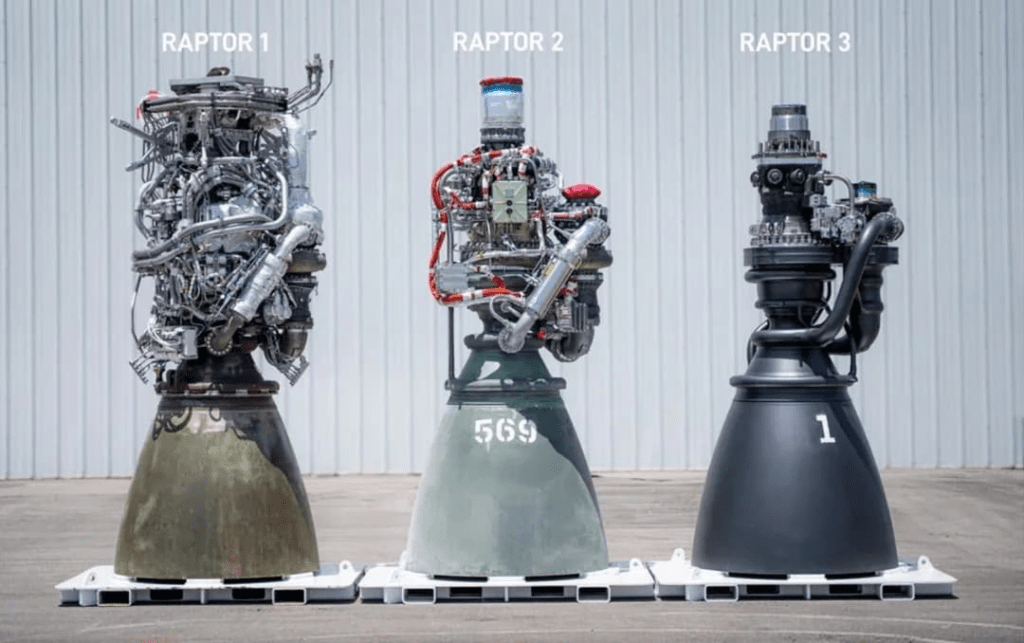

As I’m typing this, I’m thinking of that image of the evolution of the Raptor engine, where it evolved in simplicity:

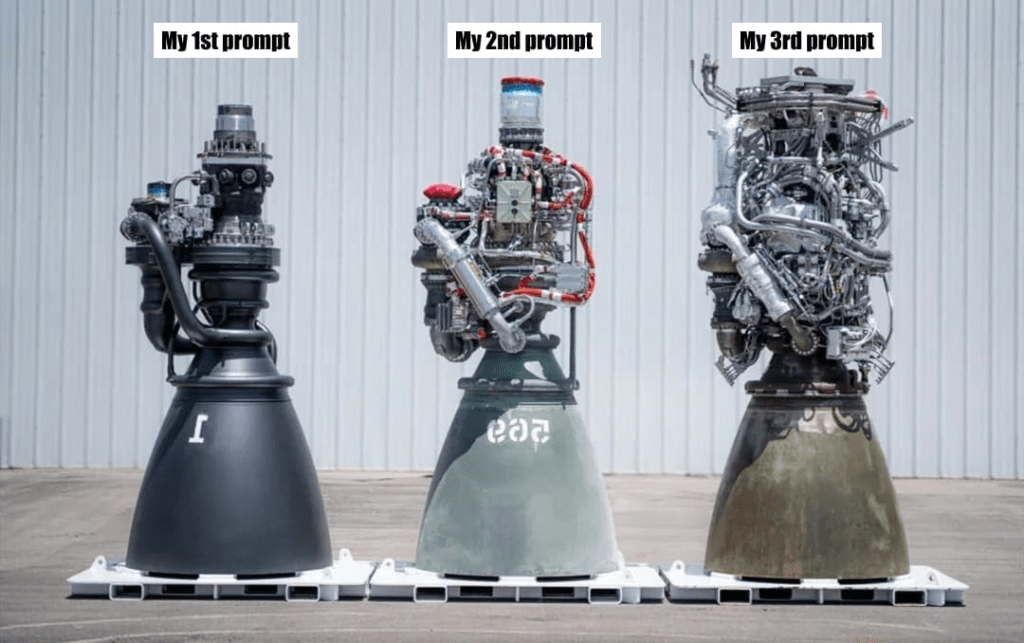

This stands in contrast to my working with LLMs, which often wants more and more context from me to get to a generative solution:

I know, I know. There’s probably a false equivalence here. This entire post started as a note and I just kept going. This post itself needs further thought and simplification. But that’ll have to come in a subsequent post, otherwise this never gets published lol.

Source: Just a Little More Context Bro, I Promise, and It’ll Fix Everything - Jim Nielsen’s Blog

I've heard this line of argument before. I think Jim's more self-aware than most that there's a false equivalence here. Let me do my best to articulate it for my own sake:

Adding more context is the mental equivalent of learning more about a problem space. Learning more about the problem space often narrows the solution space, and a narrower solution space often lets you simplify the solution.

The Raptor engine example here is absolutely great, because the path to a simplified engine ran through an exponential increase in context about the engine's requirements over time. It's crazier when you think that our brains capture high-bandwidth context across senses, while the context for an LLM mostly consists of more text (often as a stand-in for denser modalities).

However, they both achieve the same thing: narrowing the solution space.

I do think there's something that Jim's alluding to here, though. The human brain retains the relevant parts of the context over time, often abstracting its inferences and learnings into knowledge. An LLM lacks that - today. I also don't see any fundamental research on the horizon that changes that. This is the reason why LLMs are totally unlike humans. A child grows their knowledge. An LLM just - is. Smart people the world over are trying to change that and augment them - let's see how that all pans out.