More experiments with local models and KV cache quantization

I’ve been toying around with the KV cache quantization parameters in llama.cpp. The smaller-model tests held up on the agent setup: I’ve had success with both the Qwen3.6-35B-A3B and gemma-4-26B-A4B models.

Here are my current llama.cpp server settings. Hardware: M1 Max Mac Studio with 64GB RAM.

Gemma 4:

    llama-server \
      -hf unsloth/gemma-4-26B-A4B-it-GGUF:UD-Q5_K_XL \
      --n-gpu-layers -1 \
      -c 262144 \
      -n 65536 \
      -ctk q4_0 \
      -ctv q4_0 \
      -t 8 \
      --flash-attn on \
      --no-context-shift \
      --temp 0.6 \
      --top-p 0.95 \
      --top-k 20 \
      --reasoning-budget 8192 \
      --repeat-penalty 1.00 \
      --presence-penalty 0.00 \
      --batch-size 2048 \
      --ubatch-size 1024 \
      --mlock \
      --chat-template-kwargs '{"preserve_thinking": true}'

To run the Qwen3.6 model instead, replace `unsloth/gemma-4-26B-A4B-it-GGUF:UD-Q5_K_XL` with `unsloth/Qwen3.6-35B-A3B-it-GGUF:UD-Q5_K_XL` in the `-hf` argument.

Lessons learned

- Q5_K_XL quantization is a good middle ground between output quality and memory use

- KV cache quantization comes with a small performance penalty

- I am hitting the limit of the 256K context window, so I dug into that next.
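For a sense of why the cache type matters at a 256K context, here is a rough back-of-the-envelope sketch. It relies on the ggml block formats (q4_0 and q8_0 pack 32 values plus one fp16 scale per block) and assumes every layer uses plain full attention. The layer count, KV head count, and head size are placeholders I made up for illustration, not the real gemma-4 or Qwen3.6 values, so treat the output as a ratio rather than a measurement.

```python
# Bytes per element for llama.cpp cache types: ggml stores q4_0 and q8_0
# in blocks of 32 values with one 2-byte fp16 scale per block.
BYTES_PER_ELEMENT = {
    "f16": 2.0,
    "q8_0": 34 / 32,  # 32 int8 values + 2-byte scale
    "q4_0": 18 / 32,  # 32 4-bit values + 2-byte scale
}


def kv_cache_bytes(n_layers, n_kv_heads, head_dim, n_ctx, cache_type):
    """Estimate KV cache size, assuming every layer uses full attention."""
    elements = 2 * n_layers * n_kv_heads * head_dim * n_ctx  # K and V
    return elements * BYTES_PER_ELEMENT[cache_type]


# Hypothetical architecture numbers, NOT the real gemma-4 or Qwen3.6 values.
shape = dict(n_layers=48, n_kv_heads=4, head_dim=128, n_ctx=262144)

for cache_type in BYTES_PER_ELEMENT:
    gib = kv_cache_bytes(cache_type=cache_type, **shape) / 2**30
    print(f"{cache_type}: {gib:.2f} GiB")
# prints:
# f16: 24.00 GiB
# q8_0: 12.75 GiB
# q4_0: 6.75 GiB
```

Whatever the real shape is, under these assumptions q4_0 needs 18/64 (about 28%) of the f16 cache. Models that mix in sliding-window layers will need less than this formula suggests.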

Context overhead minimization

codex, gemini, and claude code all run in the cloud and have giant context windows, upwards of a million tokens. So until now, I hadn’t optimized my prompts and skills for token efficiency.

Turns out, gpt and gemini models are fantastic at analyzing the context hierarchy and pointing out redundancies. The big unlock, apart from pithy writing, is selective loading: breaking the big context files into smaller chunks that are loaded on demand.
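To make the idea of on-demand chunks concrete, here is a toy sketch. It is not how pi or any of the other harnesses decide what to load. The file names, the keyword triggers, and the `build_context` helper are all hypothetical, and real setups usually let the model itself decide when to read a chunk. The point is only that the always-loaded core stays small and everything else is opt-in.

```python
# Hypothetical layout: a small core that is always loaded, plus topic chunks
# that are only pulled in when the task mentions one of their keywords.
CORE = "Repo rules: run tests before committing. Keep answers short."

CHUNKS = {
    "testing.md": (("test", "pytest"), "Testing guide: run the unit tests first."),
    "release.md": (("release", "deploy"), "Release checklist: tag, build, publish."),
}


def build_context(task, core=CORE, chunks=CHUNKS):
    """Return the core plus only the chunks whose keywords appear in the task."""
    task_lower = task.lower()
    parts = [core]
    for name, (keywords, text) in chunks.items():
        # Plain substring match; crude, but enough to show the idea.
        if any(word in task_lower for word in keywords):
            parts.append(f"## {name}\n{text}")
    return "\n\n".join(parts)


print(build_context("Fix the failing pytest run"))
```

A task like "Fix a typo in the README" matches no keywords here, so it gets the core alone.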

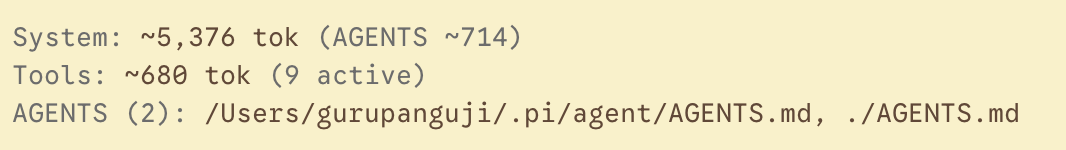

Since the optimizations, my default context is down to under 6000 tokens. That is still about 6x the default pi context of roughly 1000 tokens, but it is half of the claude code, codex, and gemini defaults. Those harnesses can afford large defaults because they run against cloud models with huge context windows. When running locally, any context overhead is a bottleneck.
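A quick way to keep an eye on this overhead is a per-file estimate of what gets loaded by default. The sketch below assumes roughly 4 characters per token, a common rule of thumb for English text and not the actual tokenizer of either model, so the numbers are only good for spotting the biggest offenders. The file names and the 6000-token budget are illustrative.

```python
CHARS_PER_TOKEN = 4  # rule-of-thumb assumption, not a real tokenizer


def estimate_tokens(text):
    """Roughly estimate the token count of a string (rounds up)."""
    return (len(text) + CHARS_PER_TOKEN - 1) // CHARS_PER_TOKEN


def context_report(files, budget=6000):
    """Return (total, within_budget, per-file estimates sorted largest first)."""
    per_file = sorted(
        ((name, estimate_tokens(text)) for name, text in files.items()),
        key=lambda item: item[1],
        reverse=True,
    )
    total = sum(tokens for _, tokens in per_file)
    return total, total <= budget, per_file


# Hypothetical always-loaded files; real content would be read from disk.
files = {"AGENTS.md": "x" * 8000, "skills-index.md": "y" * 4000}
total, ok, per_file = context_report(files)
print(total, ok, per_file)
# prints: 3000 True [('AGENTS.md', 2000), ('skills-index.md', 1000)]
```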

Bringing GEMINI.md along

The other learning: instead of duplicating everything from AGENTS.md into GEMINI.md, add a simple snippet to GEMINI.md that references AGENTS.md, as follows:

    # GEMINI.md

    Follow `AGENTS.md` for repo-specific rules.

Overall, this is a much cleaner setup that’s now working across pi as well as all the other harnesses.
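If you maintain several repos, the pointer file can be stamped out with a few lines of Python. This is just a convenience sketch. The `write_pointer` helper is my own hypothetical name, and it assumes AGENTS.md already lives at the repo root.

```python
from pathlib import Path

POINTER_BODY = "Follow `AGENTS.md` for repo-specific rules.\n"


def write_pointer(repo_root, filename):
    """Create a stub such as GEMINI.md that defers to AGENTS.md."""
    root = Path(repo_root)
    if not (root / "AGENTS.md").is_file():
        raise FileNotFoundError("AGENTS.md must exist before adding pointers")
    target = root / filename
    # Heading mirrors the file name, followed by the one-line pointer.
    target.write_text(f"# {filename}\n\n{POINTER_BODY}", encoding="utf-8")
    return target
```

Calling `write_pointer(".", "GEMINI.md")` from the repo root reproduces the snippet above.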